Every time I read an article about next-gen hardware, the computer scientist inside me vomits. I hate how all these “video games journalists”, usually under-educated and with little to no research, talk about and compare hardware and software without having a single clue what they are saying.

This blog post will be technical, maybe not as much as a Systems Architecture 101 module at university, but I will try to keep it technical. If you want one of those “It has 8GBs, thus can store many things at once” articles, please stop reading. If you don’t like it when someone bashes your favourite console, please stop reading. If you want to learn a few things about how hardware really works and what really matters in it, continue…

Architecture, what is it?

First, a quick introduction to X86: established by Intel in 1978 as a 16-bit instruction set architecture and “re-invented” (again by Intel) in 1985 with 32-bit support, X86 is the dominant system architecture. The architecture underwent another major change in 2003, when AMD released “X86-64”, or as AMD calls it, the AMD64 architecture. AMD64 is also known in the Windows world as X64 (other OSes use different names).

The idea behind AMD64 is simple: 32-bit CPUs started to limit the virtual address space (I will call it, incorrectly, for the convenience of non-technical people: RAM). A 32-bit address can only reach 2^32 bytes, i.e. 4GBs. However, creating a brand-new architecture, like Intel was planning to do, would limit legacy code support. Instead, AMD created an X86 processor with 8 new general-purpose registers and extended the existing general-purpose registers (the ones used for arithmetic, logical, memory-to-register and register-to-memory operations) from 32-bit to 64-bit. This ensured 16/32-bit code support, but also the ability to run 64-bit code using “long mode”. Intel eventually implemented AMD’s solution and X86-64 became the “gold standard”.
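To put numbers on the address-space limit, here is a minimal sketch of the arithmetic. The helper name `addressable_bytes` is made up for illustration, and the 48-bit figure is an assumption about typical X86-64 implementations (many of them wire up 48 bits of virtual address rather than the full 64).

```python
GIB = 2 ** 30  # bytes in one gibibyte


def addressable_bytes(address_bits: int) -> int:
    """Number of distinct byte addresses reachable with this many address bits."""
    return 2 ** address_bits


# A 32-bit pointer tops out at 4 GiB of address space.
print(addressable_bytes(32) // GIB)   # 4

# Assumption: a 48-bit virtual address, common on X86-64 CPUs.
print(addressable_bytes(48) // GIB)   # 262144 (i.e. 256 TiB)
```

So the jump is not from 4GBs to 8GBs, it is from 4GBs to "more than you will ever plug into a console".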

Over the years, we saw changes such as the memory controller being integrated into the CPU and, most recently, even the GPU. AMD innovated with their “APUs” (Accelerated Processing Units) by combining the CPU and GPU on a single die, with functions such as GP-GPU computing. The Heterogeneous System Architecture (HSA) of AMD’s APUs allows the GPU to access low-level hardware in the same way as the CPU does. The most important HSA feature for the next generation is the Unified Address Space, which allows the CPU and GPU to access the same memory pool. I will get back to this later.

As both PS4 and XBOne use an AMD APU based on the Jaguar microarchitecture, they implement HSA, while retaining an X86-64 (or X86 for short) architecture for the CPU, or in more detail: an X86 instruction set for the CPU.

Thanks to HSA and having both the CPU and GPU on the same die, the bottlenecks of the more “traditional” X86 architecture (the so-called von Neumann architecture) are decreased, if not eliminated. I personally do not believe we can directly compare a PC with either of the two consoles in terms of “raw power”, on paper at least. Having instant communication between the CPU and GPU, and allowing the GPU to access all the “goodies” the CPU can, is going to make game engine developers feel like kids in a candy store. In the PC world, nVidia tries to push GP-GPU computing with their CUDA framework, while Intel (who doesn’t want CPUs to become obsolete) tries to reinforce the CPU, limiting the GPU to graphics/physics processing. AMD APUs simply offer the best of both worlds. Sure, there are disadvantages, especially in the modularity of the system, but still, for what it’s worth: amazing job, AMD!

Memory, the marketing hype and the real advantages

“It has 8GBs!!!! GDDR5 RAM!!!” That was the line that dominated PS4 articles after the reveal of the console. Numerous sites, desperate for some traffic, started talking about how great it is or how it will compare with XBOne’s DDR3 RAM. Then articles on how PS4 uses 3.5GBs of RAM for the OS, or how XBOne’s eSRAM will provide similar performance, spawned like Zerg hatchlings all over the net. On top of that, you get PC gamers saying how their PCs have had 8GBs for some years now.

Let’s start with the capacity first. As I discussed above, X86-64 supports more than 4GBs of RAM, and indeed PCs have had 8GBs as their “standard” for some years now (my current laptop has 16GBs!). However, I am running Virtual Machines, remote connectors and other memory-hungry tasks. Is there really a benefit for gaming from 8GBs or more? Yes and no.

PCs have two types of memory: your well-known RAM and vRAM. RAM is the temporary memory pool for the CPU (often called system memory) and vRAM is the one for the GPU. vRAM is much simpler than RAM, as it can only hold shader programs, vertex buffers, index buffers and textures (the largest files out of the four). The only thing RAM can’t do is feed data directly into the GPU, i.e. serve as a memory pool for the GPU. This is why you load graphics-related assets into RAM and then COPY them to vRAM. This copying is one of the biggest performance bottlenecks in PC gaming. Not only does copying take time, but temporarily (assuming the developer knows how to properly clean up unused resources) the same data sit in both RAM and vRAM. A bigger RAM pool means you can keep not only the data the GPU currently needs, but also data it may need soon or accesses frequently. This is done because GPU memory tends to be much smaller, ~1GB. Currently 1-2GBs of vRAM are great, as the GPU pulls and processes data fast enough to reduce the need to store data for a long period of time (some ms). A rule of thumb is: if you play at extreme resolutions (like having 3-4 monitors at 1080p each), you need bigger textures, thus more vRAM. Moreover, more vRAM lets devs be more “loose” with their memory usage.
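To get a feel for why textures are the big item in vRAM, here is a rough sketch of the arithmetic. It assumes uncompressed RGBA textures at 4 bytes per pixel, which is a simplification (real games usually use compressed formats), and `texture_bytes` is just an illustrative helper, not part of any graphics API.

```python
MIB = 2 ** 20  # bytes in one mebibyte


def texture_bytes(width: int, height: int, bytes_per_pixel: int = 4,
                  mipmaps: bool = False) -> int:
    """Memory taken by one uncompressed texture, optionally with its mipmap chain."""
    total = 0
    w, h = width, height
    while True:
        total += w * h * bytes_per_pixel
        if not mipmaps or (w == 1 and h == 1):
            break
        # Each mipmap level halves both dimensions, down to 1x1.
        w, h = max(1, w // 2), max(1, h // 2)
    return total


print(texture_bytes(1024, 1024) // MIB)  # 4
print(texture_bytes(4096, 4096) // MIB)  # 64
```

Doubling the side of a texture quadruples its footprint, so a handful of very large textures can eat a 1GB card quickly; the full mipmap chain adds roughly another third on top.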

A quick, muuuch simplified and a tad inaccurate “data flow” of how a texture/shader is processed:

1) Game asks the shading framework (e.g. OpenGL) for the texture.

2) The framework requests it from its drivers. The drivers sit at OS level.

3) OS asks the CPU, CPU responds instantly and asks HDD.

4) HDD gets the texture, sends it to the CPU, the CPU allocates it to RAM.

5) GPU alerts CPU that it wants the texture and CPU copies it from RAM to vRAM.

6) GPU retrieves the data from vRAM and processes them.

7) Processed data are outputted to the user, alerting the game that data were outputted.

8) Game asks CPU to delete RAM data.
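The steps above can be sketched as a toy simulation. Everything here is hypothetical (the class `DiscreteGpuSystem`, the file name `brick.tex`, the 4 MiB size); it only models where the bytes live at each step, not any real driver or API.

```python
TEXTURE_SIZE = 4 * 1024 * 1024  # hypothetical 4 MiB texture


class DiscreteGpuSystem:
    """Toy model of a PC with separate RAM and vRAM pools."""

    def __init__(self) -> None:
        self.disk = {"brick.tex": bytes(TEXTURE_SIZE)}
        self.ram: dict[str, bytearray] = {}
        self.vram: dict[str, bytearray] = {}
        self.log: list[str] = []

    def request_texture(self, name: str) -> int:
        """Run the flow for one texture; return the peak bytes held in RAM + vRAM."""
        # Steps 1-4: game -> framework -> driver/OS -> CPU -> HDD -> RAM.
        self.ram[name] = bytearray(self.disk[name])
        self.log.append("disk -> RAM")

        # Step 5: the CPU copies the texture from RAM to vRAM.
        self.vram[name] = bytearray(self.ram[name])
        self.log.append("RAM -> vRAM")

        # At this point the same data exist twice.
        peak = len(self.ram[name]) + len(self.vram[name])

        # Steps 6-7: the GPU reads from vRAM and the result is shown.
        self.log.append("GPU renders from vRAM")

        # Step 8: the game asks for the RAM copy to be freed.
        del self.ram[name]
        self.log.append("RAM copy freed")
        return peak


pc = DiscreteGpuSystem()
peak = pc.request_texture("brick.tex")
print(peak // (1024 * 1024))  # 8: the 4 MiB texture briefly occupies 8 MiB
```

The point of the sketch is the `peak` value: until step 8 runs, every texture costs you its size twice, plus the time of the copy itself.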

Now, hopefully, you can see why games such as The Last of Us had amazing graphics while PS3 only had 256MBs of RAM (plus 256MBs of vRAM)… and why Bethesda are terrible devs when it comes to porting a game from one console to another… ;-)

On a PC, 8GBs are “needed” for Full HD, as the OS, frameworks, etc. run in the background. On a console, things such as the shading framework are embedded in the OS. Even if the PS4 OS uses 3.5GBs, the remainder is more than plenty for games! As long as the devs don’t rush (much) with their code.

How about the speed of RAM? I can recall that a few years ago I had to make a choice: DDR2 or DDR3 for my desktop. I went with the first one. Not only was it cheaper, but back then the performance was identical, if not, in some instances, better on DDR2. Why? DDR2 back then had a lower CAS latency. CAS latency is, in simple words, the delay between the moment the memory controller asks RAM for some data and the moment the data are available to be retrieved. Nowadays CAS latency in DDR3 is as low as, if not lower than, that of DDR2. Still, in the real world, a 1-2 FPS gain is not a deal breaker, is it?
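CAS latency is quoted in clock cycles, so comparing DDR2 and DDR3 kits means converting to nanoseconds first. Below is a minimal sketch of that conversion. The three kits are illustrative picks of mine (not the ones I was choosing between back then), and the formula relies on DDR memory transferring data twice per clock.

```python
def cas_latency_ns(cas_cycles: int, data_rate_mts: float) -> float:
    """Convert a CAS latency in cycles to nanoseconds.

    DDR transfers twice per clock, so the clock in MHz is half the
    data rate in MT/s (e.g. DDR2-800 runs at 400 MHz).
    """
    clock_mhz = data_rate_mts / 2
    return cas_cycles / clock_mhz * 1000


# Hypothetical example kits, chosen only to illustrate the point.
kits = {
    "DDR2-800 CL4": (4, 800),
    "DDR3-1333 CL9": (9, 1333),
    "DDR3-1600 CL8": (8, 1600),
}

for name, (cl, rate) in kits.items():
    print(f"{name}: {cas_latency_ns(cl, rate):.1f} ns")
```

With these example numbers the early DDR3 kit is actually slower to respond (about 13.5 ns) than the DDR2 one (10 ns), while the later DDR3 kit catches up, which matches what I saw at the time.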

What about GDDR5 compared to DDR3? GDDR5 is actually based on DDR3, just with an 8-bit buffer! The G in GDDR5 stands for Graphics, as this type of memory is normally used exclusively in graphics cards.

Wait, exclusively for graphics cards?! Then how is PS4 using it? PS4, as I said above, uses an APU with HSA. This allows it to bypass the biggest bottleneck in computer graphics: copying the textures from RAM to vRAM. PS4, similar to XBOne, will use ONE memory pool for both system and video. The idea behind HSA and how it handles memory: why copy the data, which may be MBs (if not GBs) big, when you can just copy a pointer that shows where the data is? That simple approach unfortunately can’t be implemented in PCs, due to Intel acting like a child and enforcing an architecture they shamelessly copied from AMD. This simple key point in the way XBOne and PS4 work gives them a huge performance boost.

Hey! All that sounds cool, but you didn’t answer: GDDR5, is it worth it? Yes, it is. The 8-bit buffer, alongside the HSA approach to memory, will do marvels in the hands of proper developers!

What about XBOne? XBOne performance in this area is harder to guess. The eSRAM approach could “theoretically” give similar or greater performance than the PS4. In practice, however, it all depends on XBOne’s OS (the way it will handle data in eSRAM) and the actual devs, and whether or not they use DirectX 11.2. Personally I believe XBOne is a little bit behind PS4 in this area, at least for multi-plats.
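The "copy a pointer instead of the data" idea can be illustrated in plain Python, as an analogy only. This is not how HSA is programmed; it just contrasts a full copy (`bytes`) with a shared view of the same buffer (`memoryview`). The 8 MiB buffer stands in for a hypothetical texture.

```python
# Hypothetical 8 MiB texture sitting in one shared memory pool.
texture = bytearray(8 * 1024 * 1024)

# "PC style": duplicate every byte into a second pool.
copied = bytes(texture)

# "Unified memory style": hand over a reference to the same bytes.
shared = memoryview(texture)

# The "CPU" now changes the texture in place.
texture[0] = 255

print(copied[0])  # 0   -> the copy is stale and must be re-copied
print(shared[0])  # 255 -> the view sees the change immediately
```

The copy costs 8 MiB of extra memory and the time to move it; the view costs a few bytes no matter how big the texture is.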
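For a rough idea of what GDDR5 buys you, peak memory bandwidth can be estimated from the bus width and the transfer rate. The figures below are the commonly quoted launch specifications, not numbers from this post, so treat them as assumptions; `peak_bandwidth_gbs` is an illustrative helper.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_mts: float) -> float:
    """Theoretical peak bandwidth in GB/s: bytes per transfer times transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * data_rate_mts / 1000


# Assumed specs: 256-bit GDDR5 at 5500 MT/s (PS4),
# 256-bit DDR3 at 2133 MT/s (XBOne main memory, eSRAM not included).
print(round(peak_bandwidth_gbs(256, 5500), 1))  # 176.0
print(round(peak_bandwidth_gbs(256, 2133), 1))  # 68.3
```

These are theoretical peaks; real games will see less, and the XBOne figure ignores whatever its eSRAM adds on top.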

Programming language, matters more than you know

The X86 architecture was heavily marketed by both Sony and Microsoft. However, the media went even further, making the whole process of porting sound as simple as pressing a “Save As” button. Is it? No. Windows, Linux and Mac computers now all use X86 processors, but do you see the same performance and instant porting of apps from one platform to another? No. Why?

Each of those OSes handles hardware resources slightly differently, and each gives programmers access to different OS-level resources and different compilers, and sometimes you get to use different Integrated Development Environments (IDEs), software used by developers to write, compile and test code.

For example, since 95 Microsoft has used the Visual Studio IDE to lure developers into Windows application development. With Visual Studio, MS released their own compilers (Visual C/C++) with direct access to Windows’ core resources, locking developers into the Windows platform, a really smart move in my opinion. Long story short, the ease of use of MS’ tools locked applications into Windows. In 2000 Microsoft introduced C#, a programming language similar to C++ with a Java skin on top of it. Unfortunately C# didn’t manage to attract much attention until 2004-05, when it did so thanks to the XNA Framework built on top of it. C#, alongside the effective XNA framework, made games development for 360 attractive, and multiple indies started to use it to develop and port games to 360. In fact, studios went further, sharing some of the Visual C# libraries between the PC and 360 versions of their games. Unfortunately MS discontinued XNA in favour of another to-be-announced framework… Moreover, their primary “graphics rendering” framework is DirectX 11 (with exclusive 11.2 support).

Sony, on the other hand, as Cell was co-developed with IBM, allowed you to use their own (from what I heard: awful) SDK or IBM’s Cell SDK. The second one, as far as I am aware, was using OpenCL. OpenCL is an open framework for parallel programming, developed originally by Apple. The lack of familiarity with these SDKs and frameworks, alongside other factors, made the first two years a nightmare for devs and gave PS owners lots of crappy ports. PS4, however, promises to change all that: Sony shipped their SDKs early and, best of all, it uses OpenGL 4.0 AND DirectX 11 (but not 11.2) within their “PlayStation Shader Language”. While Sony again created a new shader language for their graphics rendering, it is built on top of the two most used shader languages. OpenGL is essentially the bread and butter of any Computer Science student with a 3D-graphics or games development programming module, and DirectX is one of those few Microsoft industry standards that are actually standards. This versatility in PS4 programming will definitely attract indies, especially since indies like using open-source, multi-plat standards like OpenGL 4.0.

What about performance? Here XBOne is the winner. DirectX 11.2 allows devs who actually implement it to achieve amazing results using far fewer resources. For more info have a look at this:

http://www.youtube.com/watc...

XBOne’s victory is a small one: in multiplat games, where devs will be reusing code and are less likely to invest in implementing 11.2, you will find both consoles equal. Moreover, between DirectX 11.0/11.1 and OpenGL 4.0, OpenGL performs a tad better.

Final thoughts

The purpose of this article was to shed some light on how the new consoles actually work and why we shouldn’t compare them to PCs. Unfortunately we have no real idea of their performance, especially when devs such as Naughty Dog create optimised games for them. At the moment their sheer power, even in the hands of inexperienced devs, will produce results almost equal to a high-end PC.

As for which console is the best, again we can’t judge from pure hardware comparisons. Their performance differences will mainly be decided by how much time devs dedicate to each console. PS4, out of the box, has a small advantage in multiplats, but even that advantage won’t be visible until much later.

I will close this blog entry by recommending that people get whatever their friends get, or whichever console has the franchises they prefer. I personally just got a new high-end laptop, as I prefer PC gaming for multiplats, and will most likely get an XBOne for Halo (most of my friends play Halo) this year and a PS4 whenever The Order: 1886 comes out.

If you have any technical questions or want me to clarify something please just ask! Apologies for the poor usage of the English language, as it is only my second language.

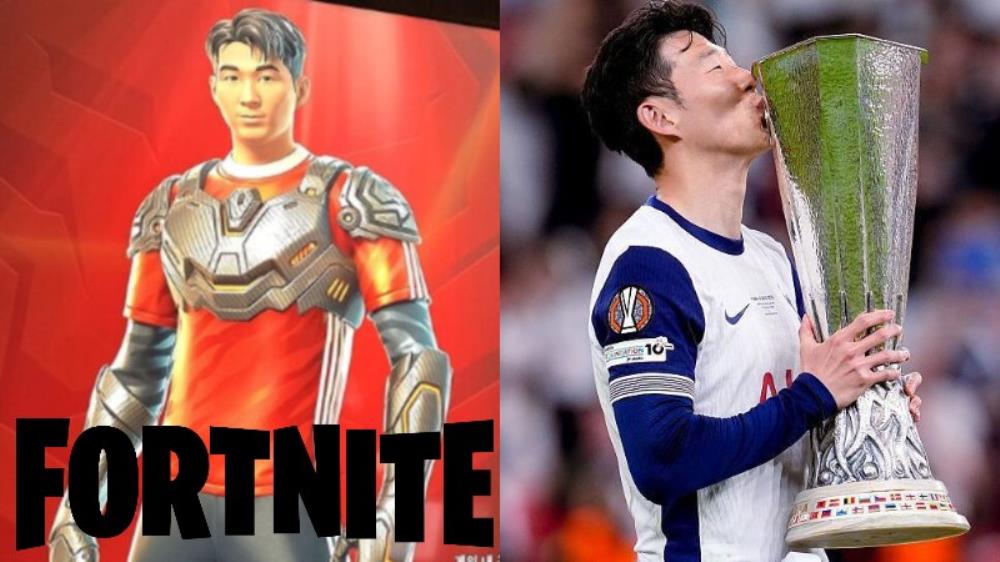

I just want to check for my blog, no currently released PC has hUMA?

I thought the Xbone ran two RAM pools. One being DDR3 and the other being eSRAM.

You also claim DX11.2 provides tiled resources as a win for MS when PRT (the hardware implementation thereof from AMD) was created using an OpenGL extension. I'm a little confused. How is it a win for MS when the OpenGL API is the most efficient (by design) way to use the hardware? TR is little more than MS attempting to "borrow" what already exists from an open source API in order to compete.

This is a great blog.

Thanks for taking the time to write it and for the very detailed explanations in the comments above.

I'm pretty sure none of these fanboys know squat about the technical stuff both systems have, well, you're not a developer.

The media just throws them a bone and they fight over it. That's the fanboy mentality over on N4G.

Wow first blog I ever read throughout on here..

I'm no programmer but I feel like optimizing code to take advantage of any hardware is what separates the different tiers of Devs on any platform.

You already know a talented dev studio is going to have a polished game that makes use of the given hardware, given the right financial backing, support and talent. That is why we have devs like Naughty Dog..Crytek..etc

That is why I never tend to compare first-party game titles "technically" across different systems because "not all devs are created equal within their respective platform choice". One dev can push the platform while the other might not be on the same level. Again, financial backing, support and talent go a long way.

After reading your blog, I have a better understanding of what these consoles are pushing for and how the "tools" being used will be just as "important" as the hardware and the devs that use them.